Camera Sensor Formats – Why Size Matters

Bulky full-frame DSLRs with gazillions of megapixels used to be synonymous with top-notch photo quality. That is no longer the case. More and more amateur photographers have come to appreciate the importance of a digital camera’s image sensor and the impact it has on their photographs. The fact is that the quality, size and makeup of the image sensor, combined with the lens and image processor, play a massive role in image quality and, ultimately, the end result.

In this article, we’ll discuss the very fundamentals of an image sensor, and why it is important to understand its role in digital photography.

What Is A Digital Image Sensor?

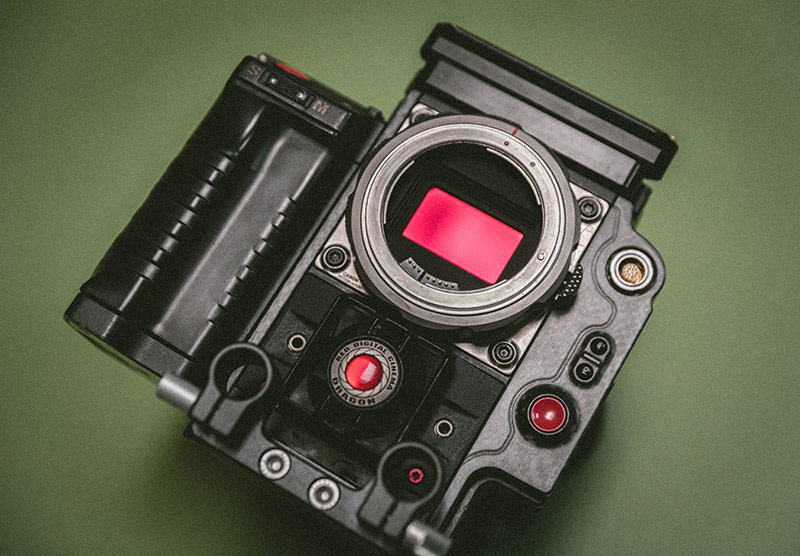

Each and every digital camera, whether it’s a gigantic professional full-frame DSLR or a small built-in mobile phone camera, is equipped with a digital image sensor. The sensor, which resembles a tiny chip, is a solid-state device that sits at the heart of the camera, just behind the lens. It plays the role that film once did, detecting and capturing all the data required to form a photograph.

Removing your lens will allow you to see your sensor.

How Does A Digital Image Sensor Work?

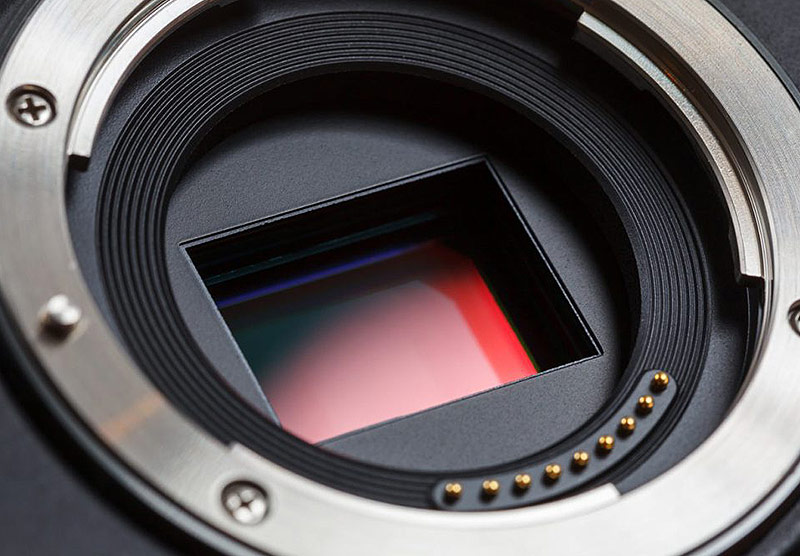

Put very simply, a digital image sensor is made up of three layers. The base layer is called the sensor substrate and is usually made of silicon. The surface of the sensor substrate is not completely flat; instead, it is covered by millions of microscopic photo-sensitive “cavities” referred to as photosites. Each individual photosite represents a pixel in the final photograph.

1) The Photosites Collect Light

When you press the shutter release button and the exposure begins, the camera’s shutter curtains open briefly, allowing light to flood through the lens onto the image sensor. Each photosite on the surface of the image sensor collects a certain number of light particles (photons) and records the intensity of the light, i.e. the number of photons that fell onto it. The number of photons collected depends on factors such as the length of the exposure (i.e. the shutter speed you chose) and the light characteristics of the scene.

When the shutter curtains finally close and the exposure comes to an end, the photons in each photosite are counted and converted into a digital number that represents the brightness of a single pixel. The image sensor circuitry then reads this information from every photosite on the sensor, and the raw data is turned into the full-coloured grid of pixels that ultimately constitutes a photograph.

The number of photons collected by a photosite is proportional to the light intensity. The more photons that hit a photosite, the brighter the pixel will be. Photosites recording light from highlights will contain many photons, while photosites recording light from shadows will contain fewer. If a photosite hasn’t captured any photons whatsoever, the pixel will be black. Once a photosite has collected the maximum number of photons (that is, once the cavity is full and can no longer fit any more), the pixel will be “blown”: completely white (think of a blown-out area in a photograph).

A photosite can only measure the number of photons it has collected, i.e. the intensity of the light; it cannot determine the colour of those photons. In other words, the photosites covering the surface of the sensor can only record in monochrome.
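As a rough illustration of the relationship described above, here is a minimal Python sketch that maps a photon count to a pixel brightness. The capacity of 1,000 photons, the 8-bit output range and the strictly linear response are all assumptions chosen for readability, not figures from any real sensor.

```python
def pixel_value(photons: int, full_capacity: int = 1000, bit_depth: int = 8) -> int:
    """Map a photon count to a pixel brightness, clipping once the photosite is full.

    full_capacity is a hypothetical number of photons a photosite can hold.
    """
    max_value = 2 ** bit_depth - 1                    # 255 for an 8-bit pixel
    clipped = min(max(photons, 0), full_capacity)     # a full photosite cannot hold more
    return clipped * max_value // full_capacity       # linear scale, rounded down


print(pixel_value(0))      # no photons: black (0)
print(pixel_value(500))    # half full: mid grey (127)
print(pixel_value(1000))   # full: white (255)
print(pixel_value(5000))   # overexposed: still 255, the pixel is "blown"
```

Note how any count above the capacity produces the same value: that lost information is why blown highlights cannot be recovered afterwards.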

A close-up of an image sensor on a camera.

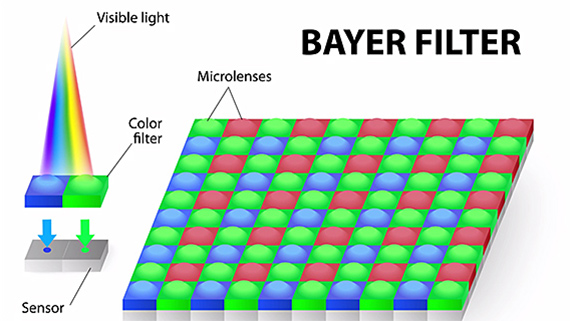

2) The Bayer Filter Prevents Certain Types of Light From Entering The Photosite

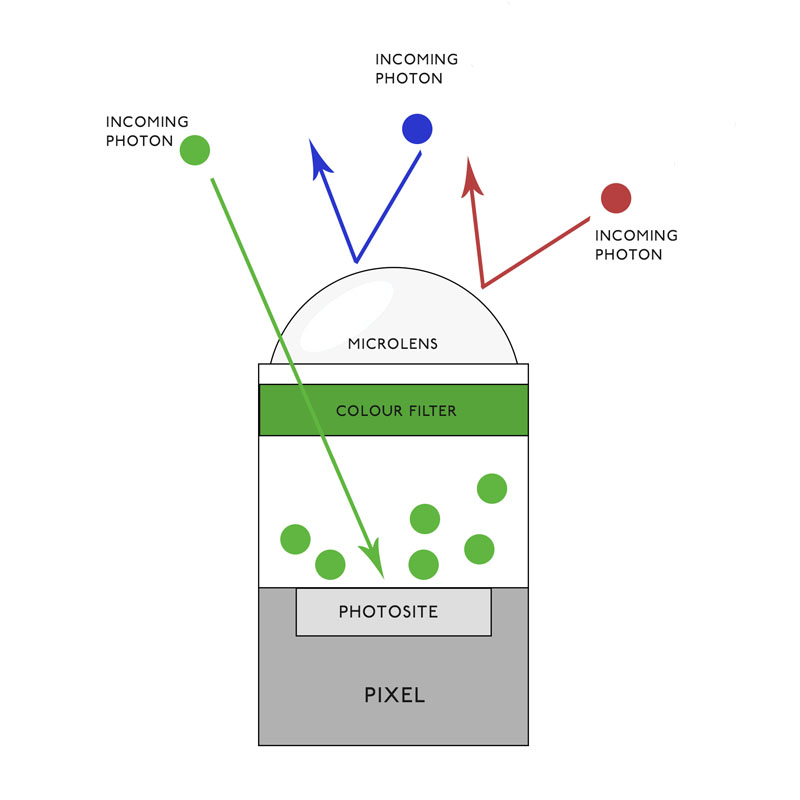

That is where the second layer, the Bayer filter, comes into play. The Bayer filter is also referred to as the RGBG filter, and it is the most common type of Colour Filter Array (CFA for short). Strictly speaking, the Bayer filter isn’t a part of the sensor itself, so it isn’t entirely accurate to call it a layer of the sensor. It is a filter bonded to the sensor substrate, where it acts as a shield, allowing only photons of a certain colour into each photosite.

Does this sound confusing? Think of it this way … The Bayer filter consists of alternating rows: one row of red and green filters, then one row of blue and green filters. The red filters only allow red light to pass through onto the photosite, the green filters only allow green light, and the blue filters only allow blue light. Thus, blue light can never penetrate a red filter, and vice versa.

Thanks to the Bayer filter, the processor (which handles the colour calculation) is able to measure not only the number of photons each photosite has collected, but also which colour those photons are. If a photosite with a red filter above it has collected 1,000 photons, the processor can deduce that these photons are all red, and thus it can measure the level of red light at that particular pixel in the photograph.
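To make the filter layout more concrete, here is a small Python sketch of the pattern described above. It assumes the rows start with red in the top-left corner (real sensors may start the pattern on a different colour), and the function names `bayer_colour` and `mosaic` are made up for this illustration.

```python
def bayer_colour(row: int, col: int) -> str:
    """Return the filter colour above the photosite at (row, col).

    Assumes even rows alternate red/green and odd rows alternate green/blue.
    """
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def mosaic(scene):
    """Keep only the colour each photosite's filter lets through.

    scene is a grid (list of rows) of (red, green, blue) intensity tuples.
    """
    channel = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[channel[bayer_colour(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(scene)
    ]


# Print the filter layout for a 4 x 4 corner of the sensor.
pattern = ["".join(bayer_colour(r, c) for c in range(4)) for r in range(4)]
for line in pattern:
    print(line)

# A uniformly coloured 2 x 2 scene: each photosite records just one channel.
scene = [[(200, 100, 50)] * 2 for _ in range(2)]
print(mosaic(scene))
```

Each photosite ends up with a single number, so the processor has to estimate the two missing colours of every pixel from its neighbours before the full-colour image exists.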

3) The Microlenses Increase the Photon Collection Area of the Pixel

Finally, the third layer of the sensor, the microlenses, sits atop the Bayer filter. Their role is to help each photosite collect the maximum amount of light. When you visualize the construction of a sensor, picture a small gap between each photosite: the photosites are not placed exactly side by side.

That means that any light that hits a gap rather than a photosite will not be used for the exposure of the photograph. The purpose of the microlenses is to reduce this waste, which they do by directing light that would otherwise have hit a gap into a photosite. In other words, the microlenses increase the effective light-collecting area, and with it the effective quantum efficiency, of each pixel.

Pictured above is an illustration of a photosite with a green colour filter lying on top of it.

Aspect Ratio

The aspect ratio of a sensor is the ratio of the sensor’s width to its height. It dictates the proportions of the image produced by the camera. A square has an aspect ratio of 1:1 (i.e. equal width and height), whilst 35mm film has an aspect ratio of 1.5:1 (1.5 times wider than it is high). Most image sensors fall in between these two aspect ratios.

To work out the aspect ratio of your camera’s images, divide the larger number in the image resolution by the smaller number. If your image is sized 800×533, the aspect ratio is approximately 1.5.
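The same calculation can be written as a few lines of Python. This is only a sketch of the division described above; the function name `aspect_ratio` is made up for the example, and the 4000×3000 resolution is just an illustrative second input.

```python
def aspect_ratio(width: int, height: int) -> float:
    """Divide the longer side of the image by the shorter side."""
    long_side, short_side = max(width, height), min(width, height)
    return long_side / short_side


print(round(aspect_ratio(800, 533), 2))    # 1.5
print(round(aspect_ratio(4000, 3000), 2))  # 1.33
```

Because the function always divides the longer side by the shorter one, a photo taken in portrait orientation gives the same result as one taken in landscape.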

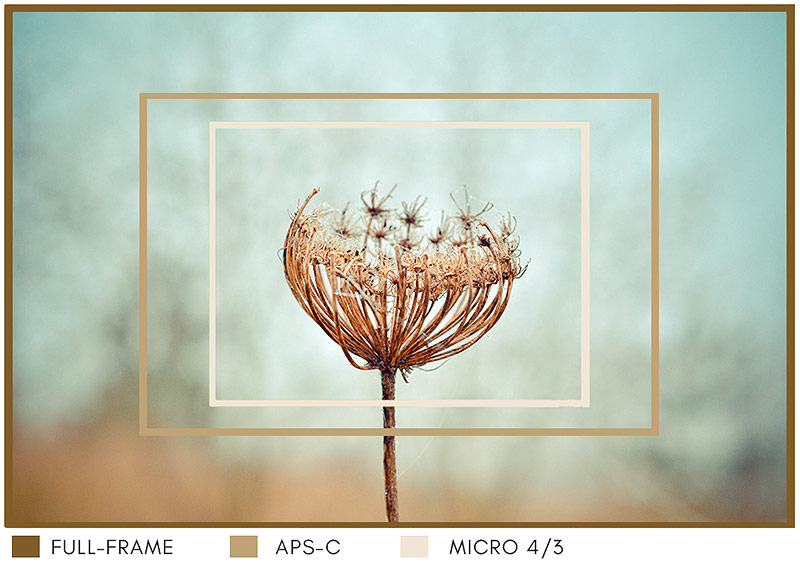

What different-sized sensors such as the Full Frame, APS-C, and Micro 4/3 would’ve captured using the same lens.

Three Popular Sensor Formats & Aspect Ratios

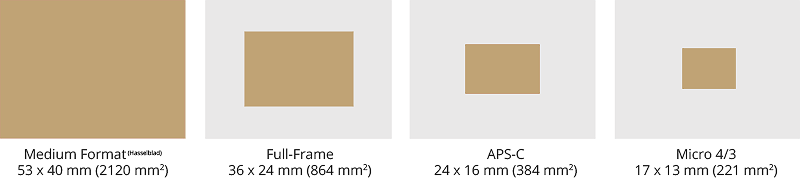

Digital image sensors are available in a variety of sizes. Three image sensor formats dominate the DSLRs and MILCs on the consumer market. The biggest is the full-frame sensor (36 x 24 mm), followed by the smaller APS-C sensor (usually 24 x 16 mm) and, smallest of the three, the Micro 4/3 sensor (17 x 13 mm).

DSLRs are equipped with either a full-frame or an APS-C sensor, whilst MILCs are equipped with any of the three sensor formats. Medium format is yet another sensor format, significantly larger than any of the three, and is usually found in the most expensive cameras. Due to its extreme rarity among travelling photographers, we will not delve deep into medium format, but merely touch upon it.

Full-frame sensors are mainly used in professional cameras, such as the flagship models in many manufacturers’ lines. The large surface area of a full-frame sensor enables it to capture a lot of light, resulting in high-quality images. As we mentioned earlier, a full-frame camera can be mirrorless or a DSLR.

APS-C sensors (also referred to as cropped sensors) are found in both DSLRs and MILCs. They were initially used mostly in entry-level and mid-range system cameras, although nowadays there are also compact cameras outfitted with a cropped sensor. An APS-C sensor provides an excellent blend of portability, image quality and flexibility with regard to interchangeable lenses. It is important to note that not all APS-C sensors are equal in size. Canon’s APS-C sensors are slightly smaller (roughly 22 x 15 mm) than the sensors found in Nikon and Sony cameras (roughly 24 x 16 mm).

Lastly, the Micro 4/3 sensor is exclusive to mirrorless cameras and is most often found in Olympus and Panasonic models. There are no DSLR cameras outfitted with this type of sensor. With a surface area of around 220 mm², the M4/3 sensor has roughly a quarter of the area of a full-frame sensor, and is about 30 to 40% smaller than an APS-C sensor, depending on the manufacturer.
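For readers who like to check the numbers, the following Python sketch compares the surface areas using the rounded dimensions quoted in this section (36 x 24 mm, 24 x 16 mm and 17 x 13 mm). Actual sensors differ slightly from these round figures and from one manufacturer to another, so treat the output as approximate.

```python
# Rounded sensor dimensions in millimetres, as quoted in this article.
SENSORS = {
    "Full frame": (36, 24),
    "APS-C": (24, 16),
    "Micro 4/3": (17, 13),
}


def area_mm2(name: str) -> int:
    """Surface area of the named sensor format in square millimetres."""
    width, height = SENSORS[name]
    return width * height


full_frame = area_mm2("Full frame")
for name in SENSORS:
    area = area_mm2(name)
    share = area / full_frame * 100
    print(f"{name}: {area} mm² ({share:.0f}% of full frame)")
```

With these rounded figures the Micro 4/3 sensor works out at 221 mm², about a quarter of the full-frame area, while a 24 x 16 mm APS-C sensor comes in at 384 mm².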

Illustration of different sensor sizes (to scale).

Sensor Size Does Matter

Image quality isn’t really dependent on the type of camera (e.g. mirrorless vs DSLR) you use, but rather on factors such as the image sensor format, the camera’s processor, and the lens. The sensor format, and to a certain extent the megapixel count, determines the general quality of a photograph. In addition, the sensor also determines how well the camera performs in low-light conditions.

It is important to understand that the size of a sensor is independent of its number of megapixels. For a given megapixel count, a larger sensor is made up of larger photosites, so it is able to collect more photons with less noise and a greater range of tones, which translates into a cleaner, more detailed photograph.

If you have two cameras with the same number of megapixels but different sensor sizes, the one with the larger sensor will generally produce better photographs. Similarly, a 10 megapixel full-frame sensor is still physically larger than a 12 megapixel APS-C sensor, and, all else being equal, the larger 10 megapixel sensor will produce better photographs than the smaller 12 megapixel sensor. Why? The larger sensor contains larger photosites, so each individual photosite is able to collect more raw data than the smaller photosites of the smaller sensor. The more raw data each photo has, the more malleable it is in post-production.

Summarizing the above, it stands to reason that a larger sensor will produce photos that have a shallower depth of field (and with it more pronounced bokeh), a wider field of view with the same lens, more dynamic range and less noise than a smaller sensor.
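The 10 versus 12 megapixel comparison can be put into rough numbers. The Python sketch below estimates the width of a single photosite, assuming square photosites that tile the whole sensor with no gaps and using the rounded 36 x 24 mm and 24 x 16 mm dimensions from earlier in the article; real sensors will differ somewhat.

```python
from math import sqrt


def photosite_width_um(width_mm: float, height_mm: float, megapixels: float) -> float:
    """Approximate width of one photosite in micrometres.

    Assumes square photosites covering the entire sensor surface.
    """
    sensor_area_um2 = (width_mm * 1000) * (height_mm * 1000)
    area_per_photosite = sensor_area_um2 / (megapixels * 1_000_000)
    return sqrt(area_per_photosite)


full_frame_10mp = photosite_width_um(36, 24, 10)
aps_c_12mp = photosite_width_um(24, 16, 12)

print(f"10 MP full frame: {full_frame_10mp:.1f} µm per photosite")
print(f"12 MP APS-C:      {aps_c_12mp:.1f} µm per photosite")
```

Under these assumptions each photosite on the 10 megapixel full-frame sensor is roughly 9.3 µm wide, against roughly 5.7 µm on the 12 megapixel APS-C sensor, which means well over twice the light-collecting area per photosite.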

Found this article informative? Feel free to pin this article and sign up to our newsletter to receive our next article, Your Next Travel Camera: The 5 Most Important Features.

15 Comments

-

robin rue

I have a Cannon Rebel and I go to YouTube when I need to know how to get a shot of something I want. Love all the videos on there.

-

Stefanie

This is such an interesting post! I´m just starting to explore the world of photography and cameras. Right know the big sensor is maybe not needed for me, but I hope that I´ll get there one day 🙂

-

Patricia

im rarely behind the camera but knowing how it works is always helpful to both the photographer and model. This is an informative article, thanks!

-

Heather Johnson

I must admit that I had never heard of a digital image sensor before. This is totally helpful because I have been trying to improve my photo skills.

-

Denay DeGuzman

I love this article! So incredibly interesting to learn about camera sensor formats. Thanks so much for sharing your expertise!

-

Ana De- Jesus

You know what I never took ‘image sensor’ into account. You see my phone has 23 mp (its the Sony Experia XZ) but I am not sure about the image sensor. Do you know by any chance? x

-

Kitty

Wow thanks for sharing this post… I had no idea about all these though I use a camera in my day to day life… This is sooo informative 😀

-

Angela Ricardo Bethea

It’s great to learn something about photography and camera tips. Reading this will surely help me with my photos a little better than before.

Blanca N Valbuena

I gave up my slr a few years back because it was just so clunky and my iPhone took such great pics, but after reading this post, I see what I am missing. I may have to put a new digital camera on my holiday wishlist.